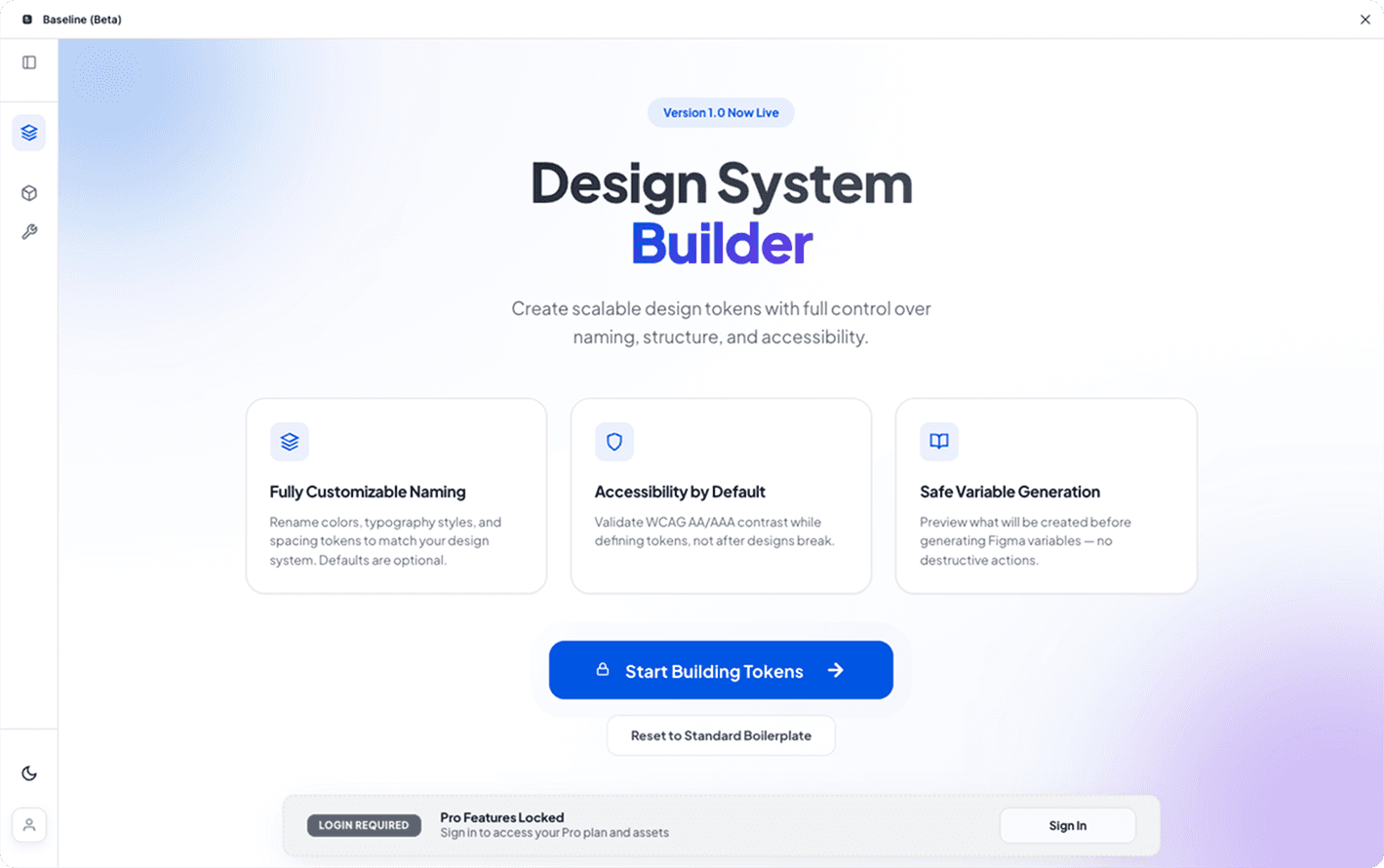

From System Creation to System Repair — Automated

How we reduced design system setup from 160 hours to 24 hours and maintenance from 7 hours/sprint to 15 minutes—with a self-healing repair engine.

Role

Product Engineer

Timeline

6 months

Platform

Figma Plugin

Impact

70% time reduction

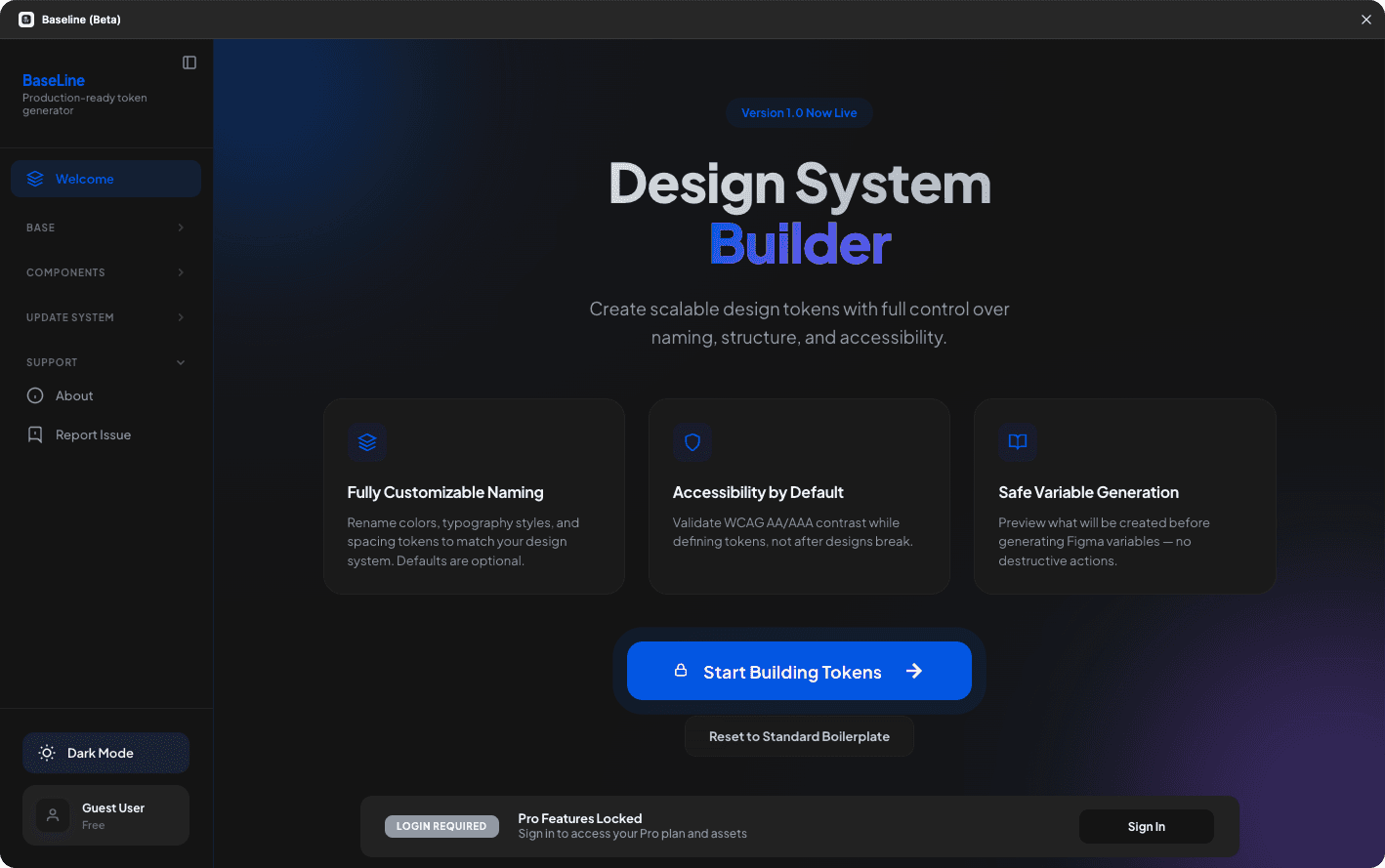

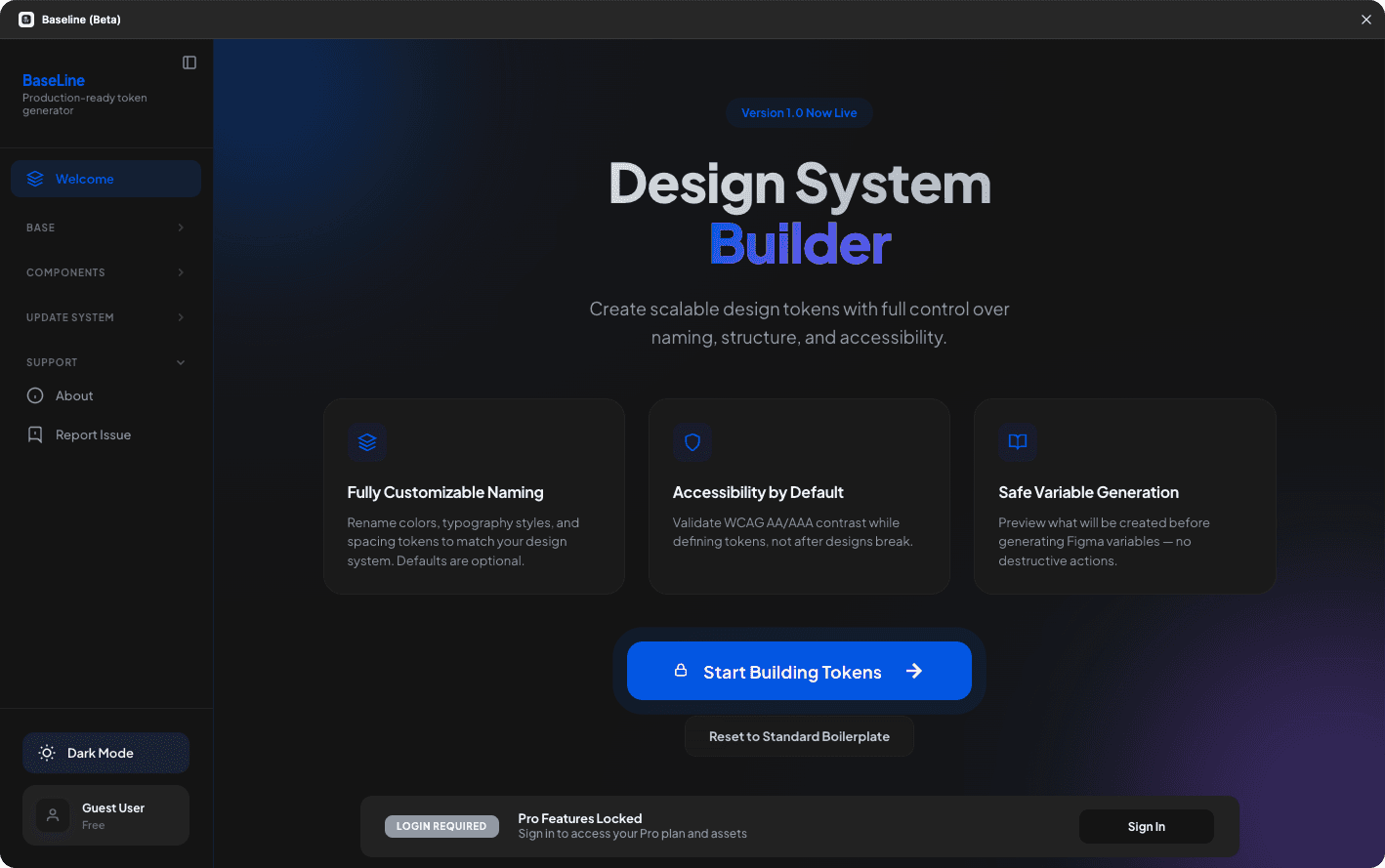

Context

Design systems break faster than teams can fix them

After working with 50+ design teams over 15 years, I observed a consistent pattern: teams spend 2–4 weeks setting up a design system, use it for 3–6 months, and then watch it degrade into chaos. Variables become detached, naming drifts, and styles are orphaned. The only remedies were starting over or running manual audits that took 40+ hours.

Hours eliminated from manual system work

Reduction in repetitive system maintenance work

From 2–3 weeks → under 7 hours

Typical Issues Found After 6 Months (162 total)

Real data from auditing a Series B SaaS company's design system. 124 detached variables meant designers were manually overriding values instead of using the system.

Hypothesis

Maintenance is harder than creation—and more valuable to solve

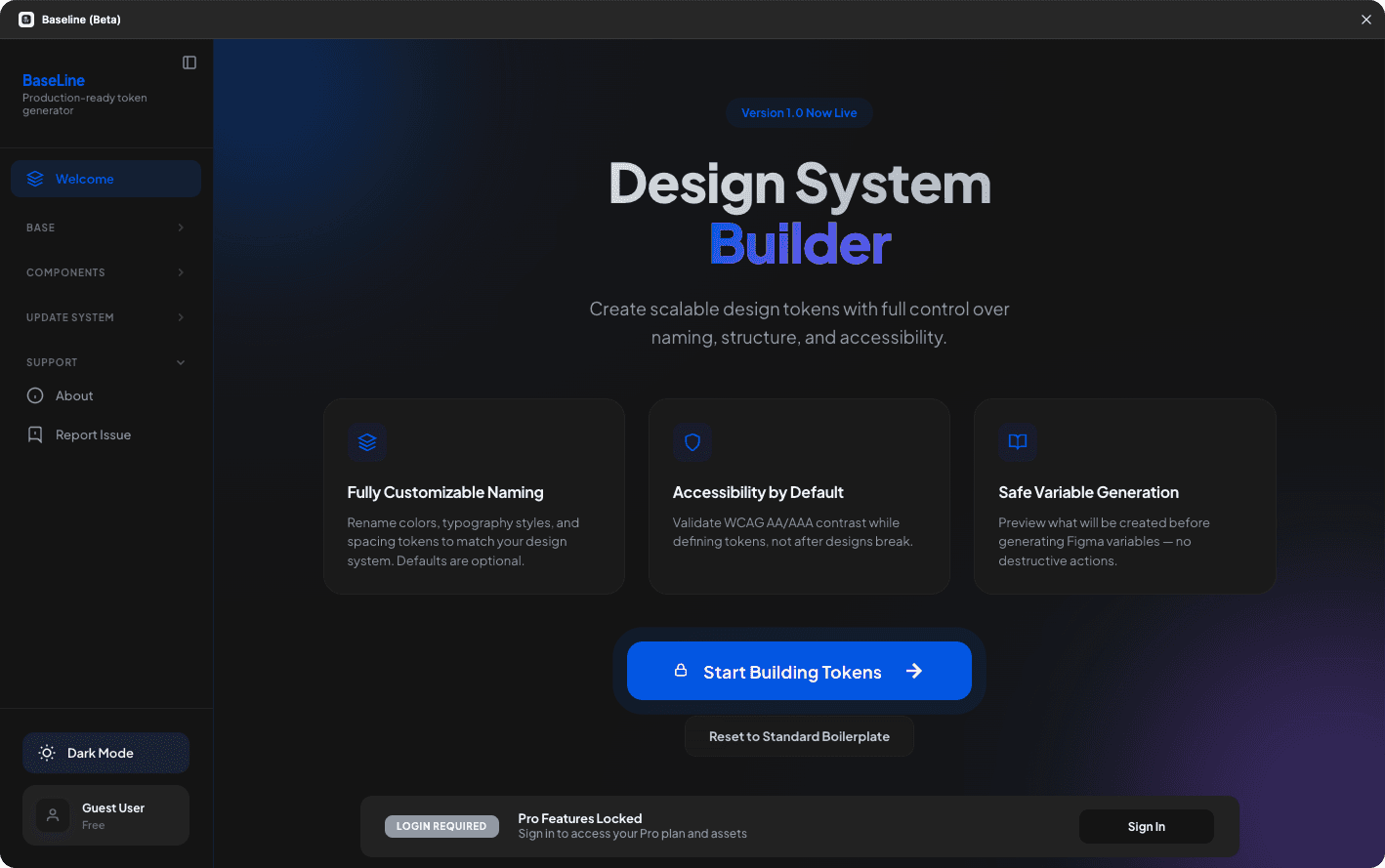

If we built a system that could detect and repair broken design systems automatically, teams could:

→ Recover legacy systems

Fix existing broken systems instead of rebuilding from scratch

→ Prevent future degradation

Run repair checks regularly to catch issues early

→ Enable faster setup

Generate systems knowing repair is available

→ Reduce manual work

Eliminate 40-hour manual audit cycles

The bet: Every tool focuses on creation (scaffolding, generation). No one solves the harder problem of systematic maintenance. If we could automate repair, we'd unlock a 10x time saving and make maintenance feasible for teams that currently can't keep their systems healthy.

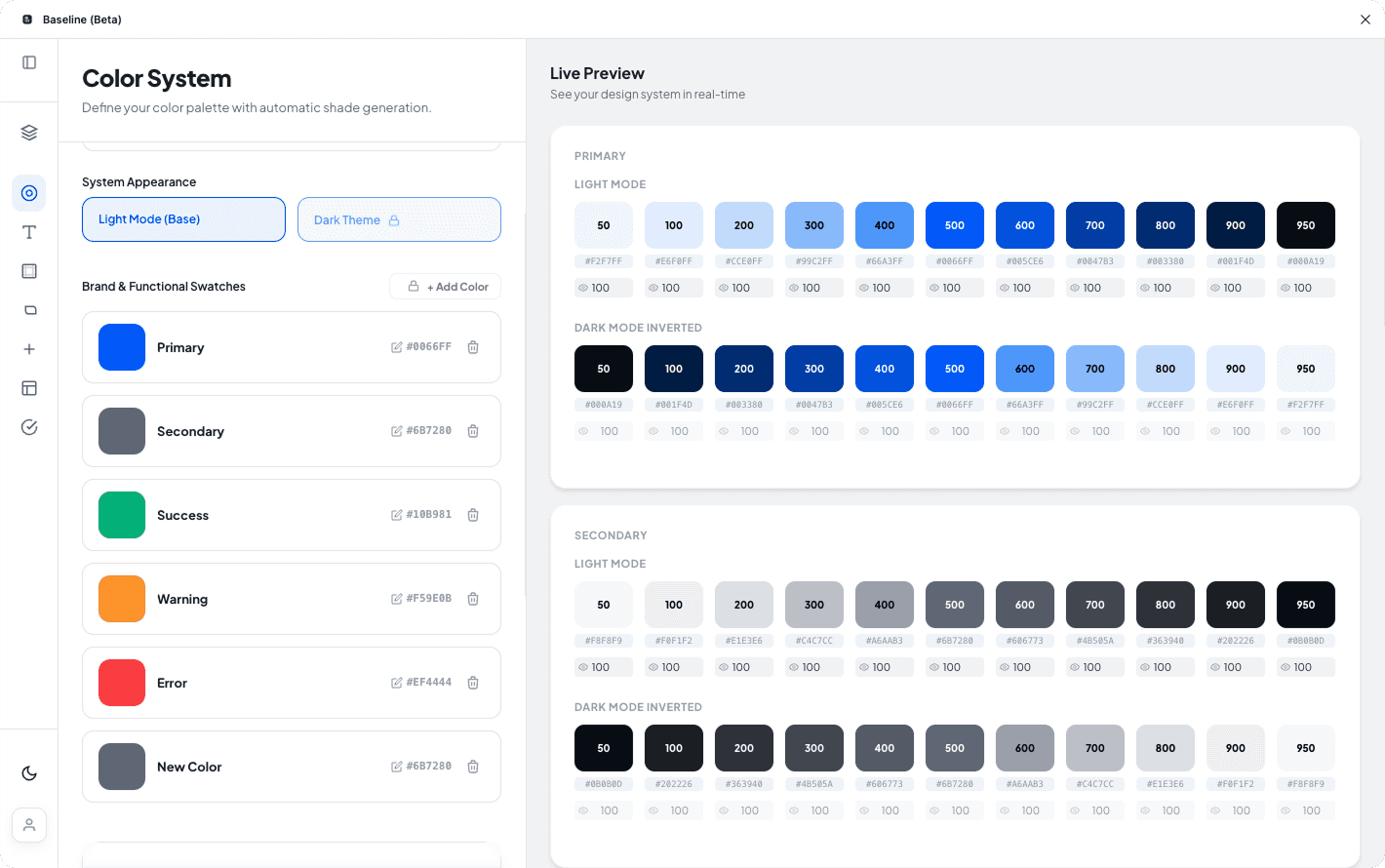

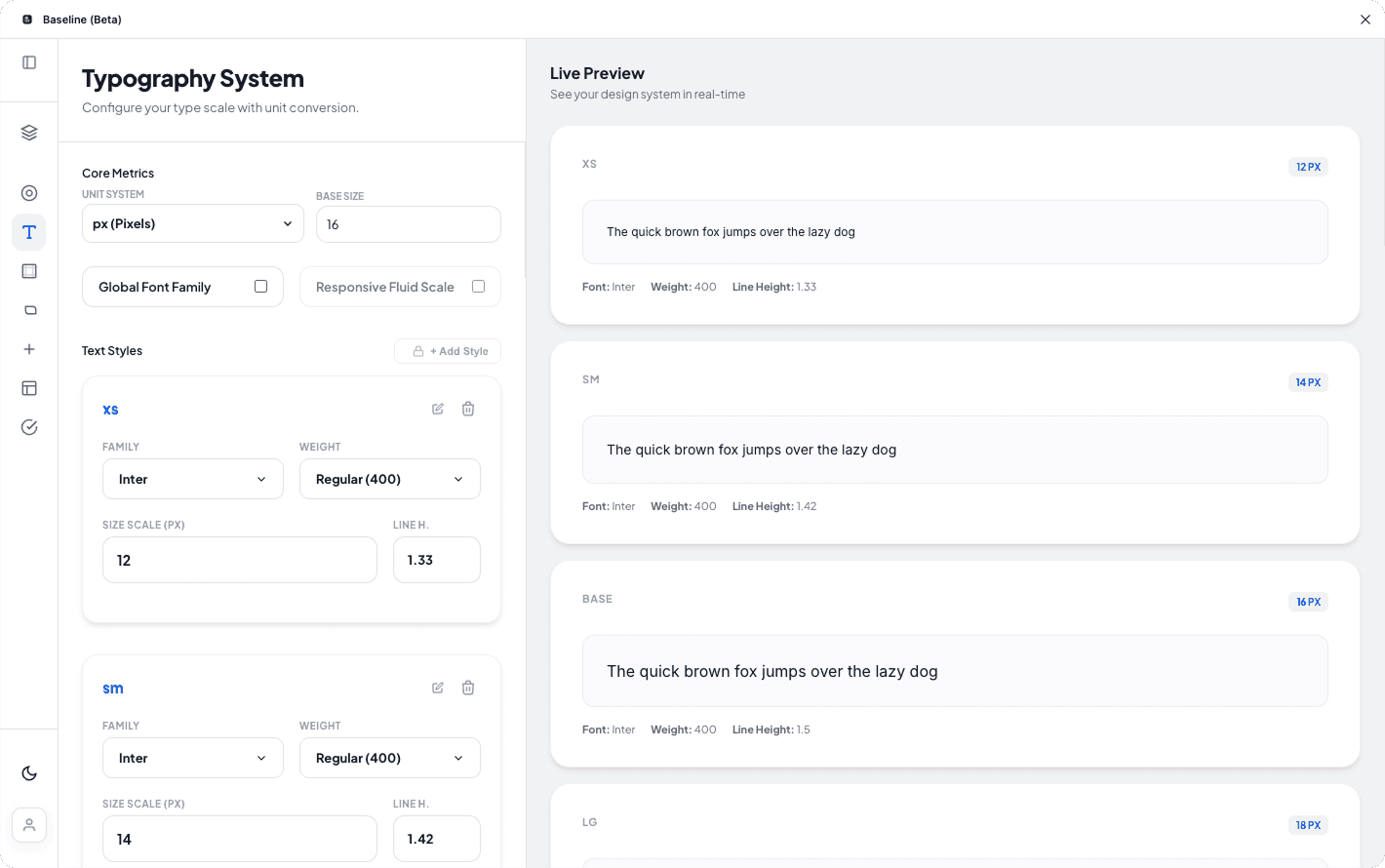

Core Solution

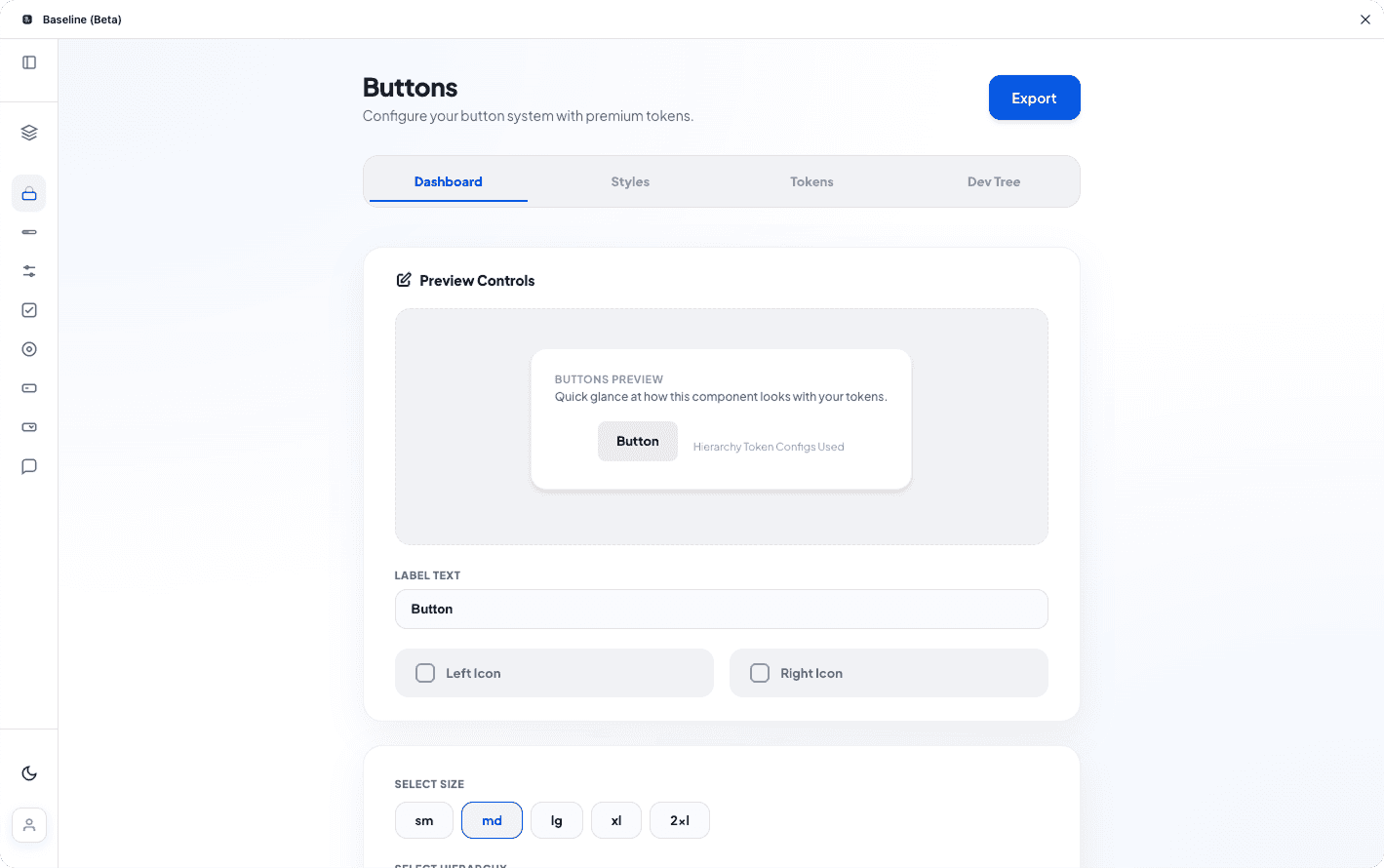

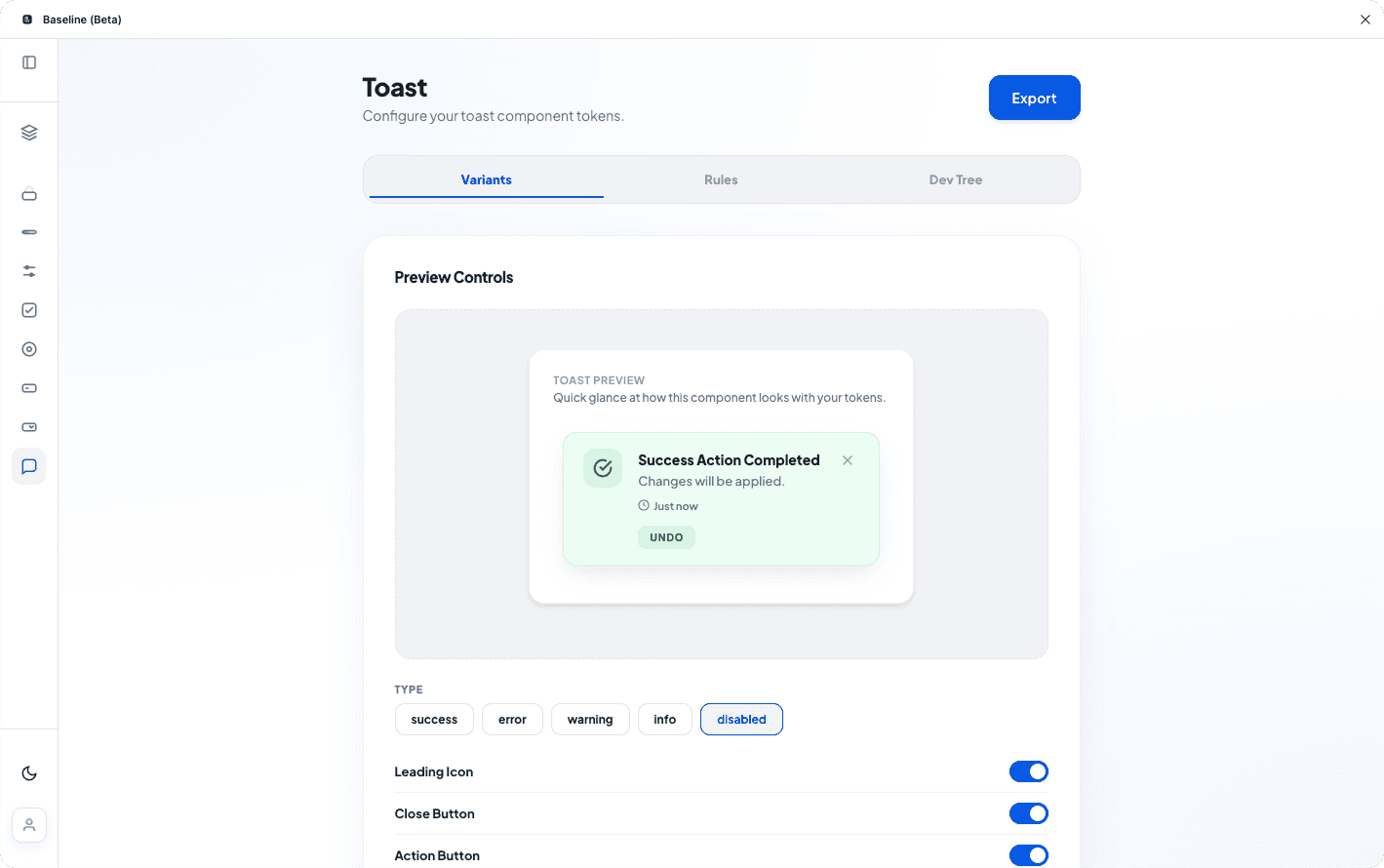

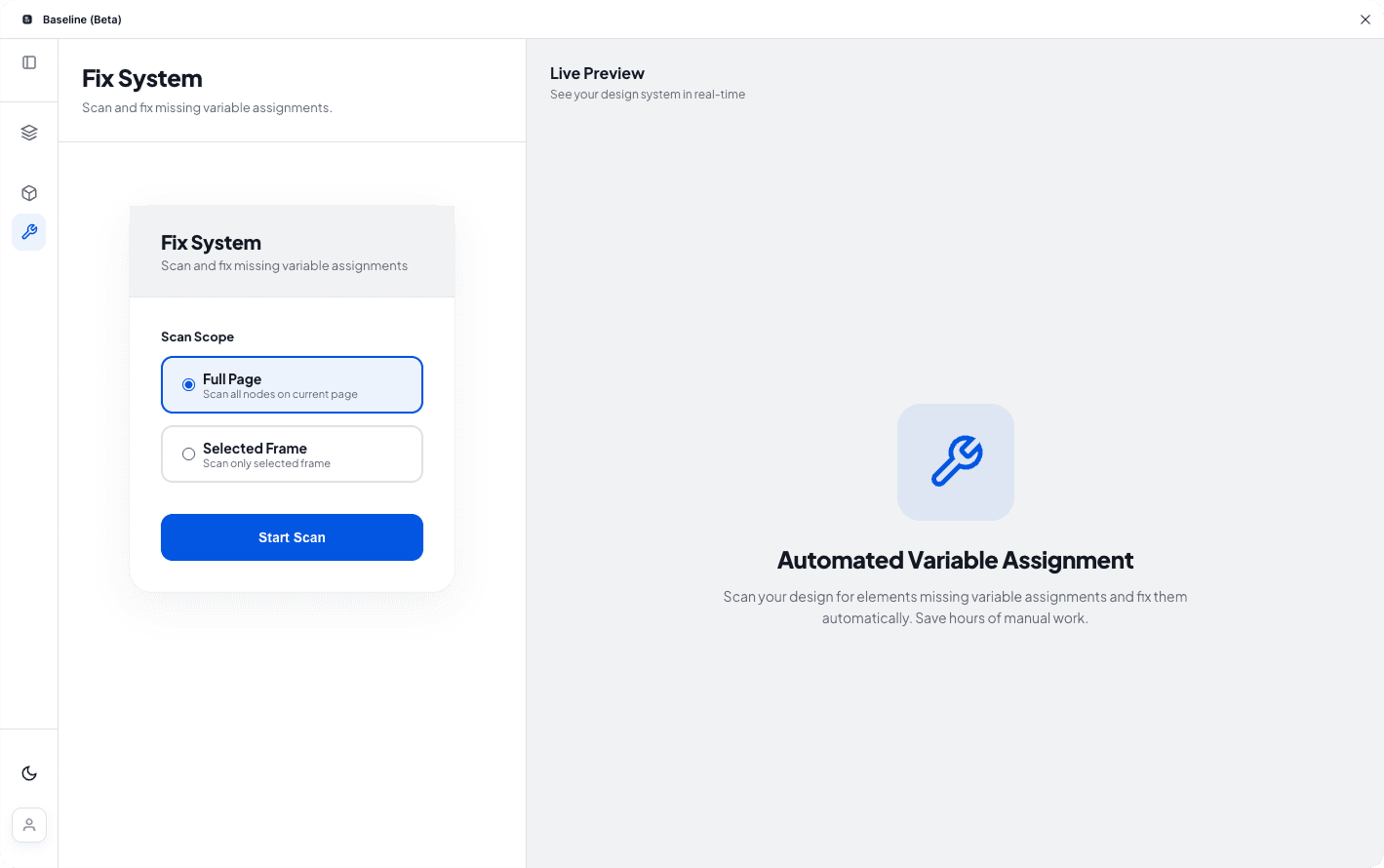

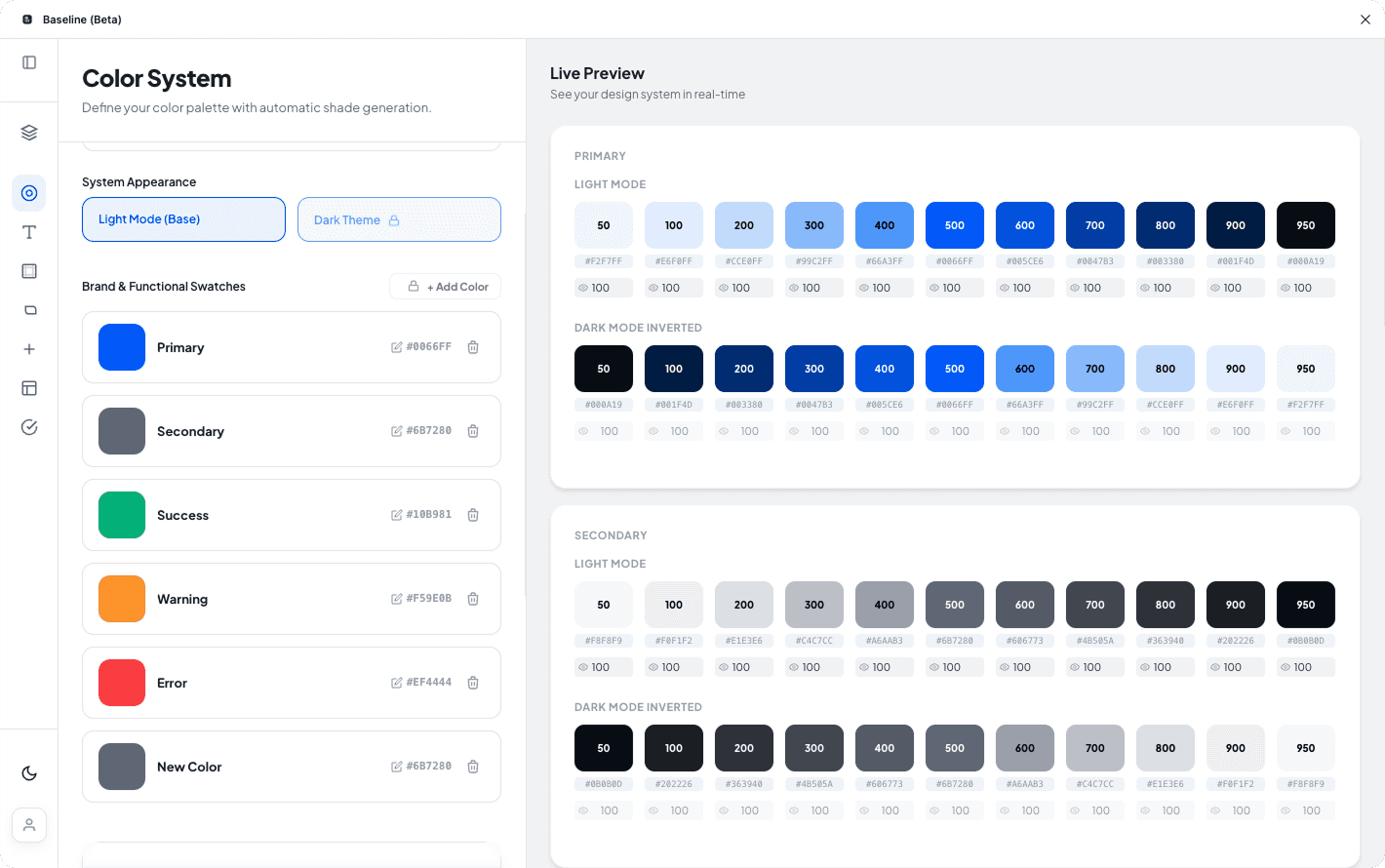

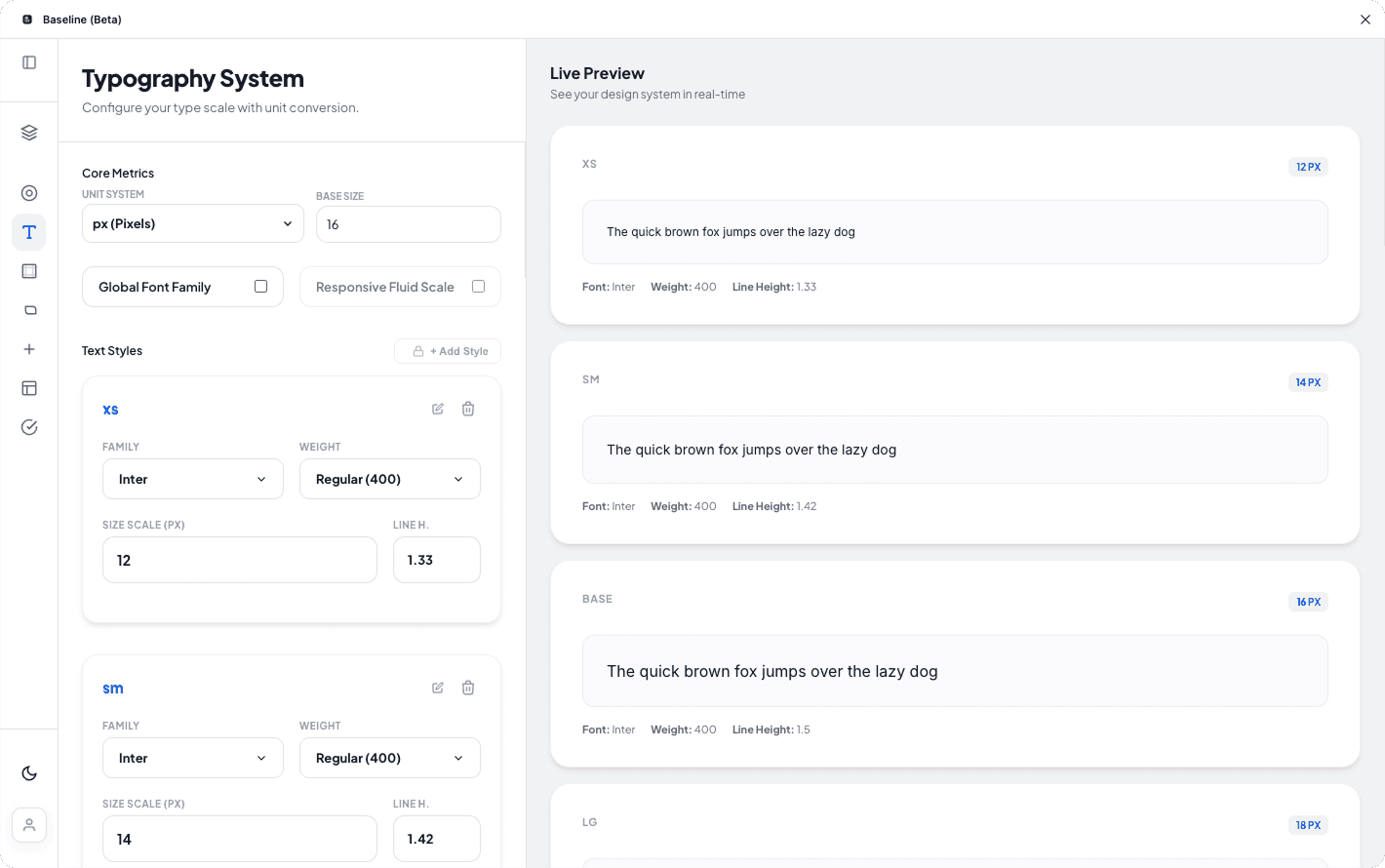

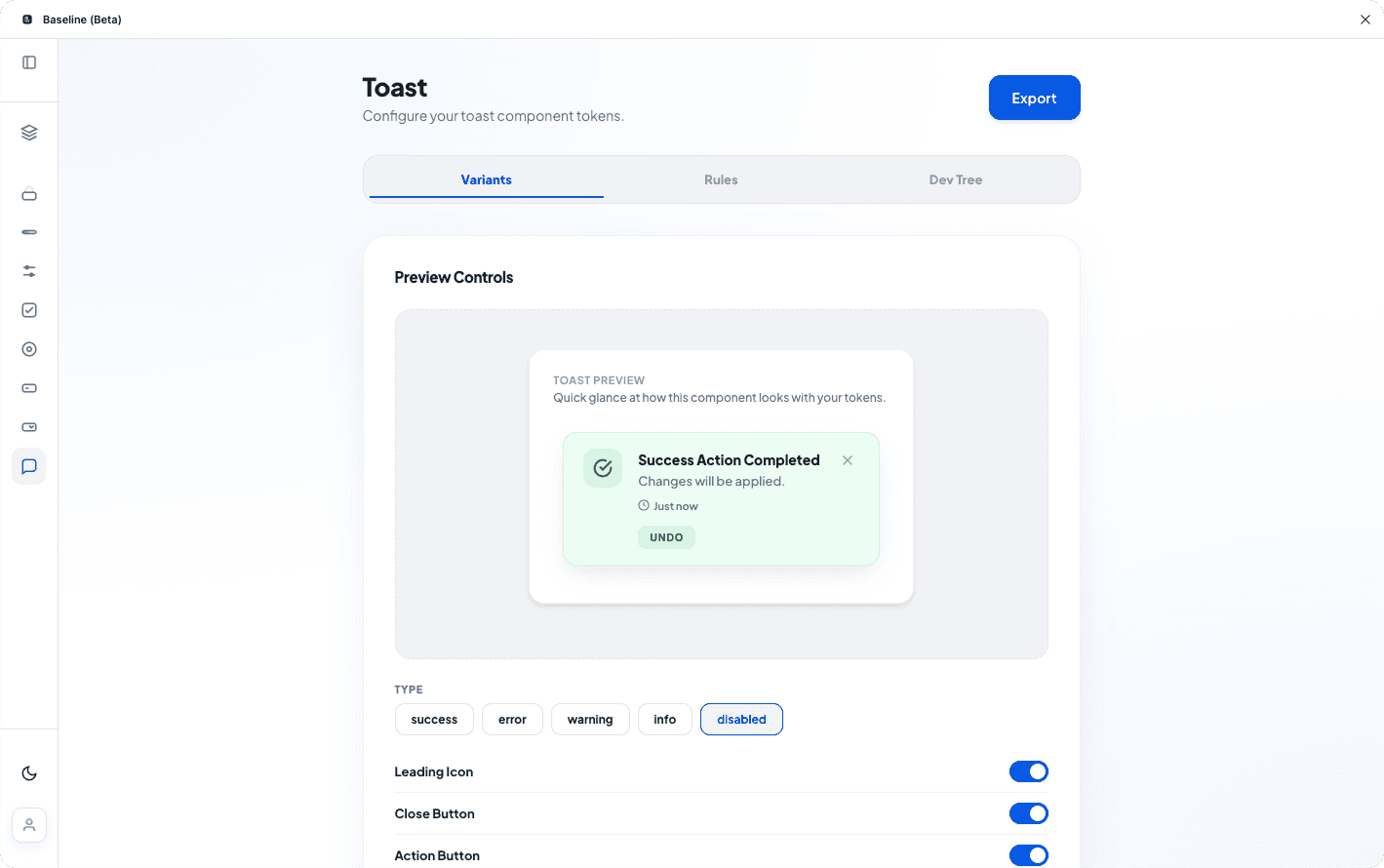

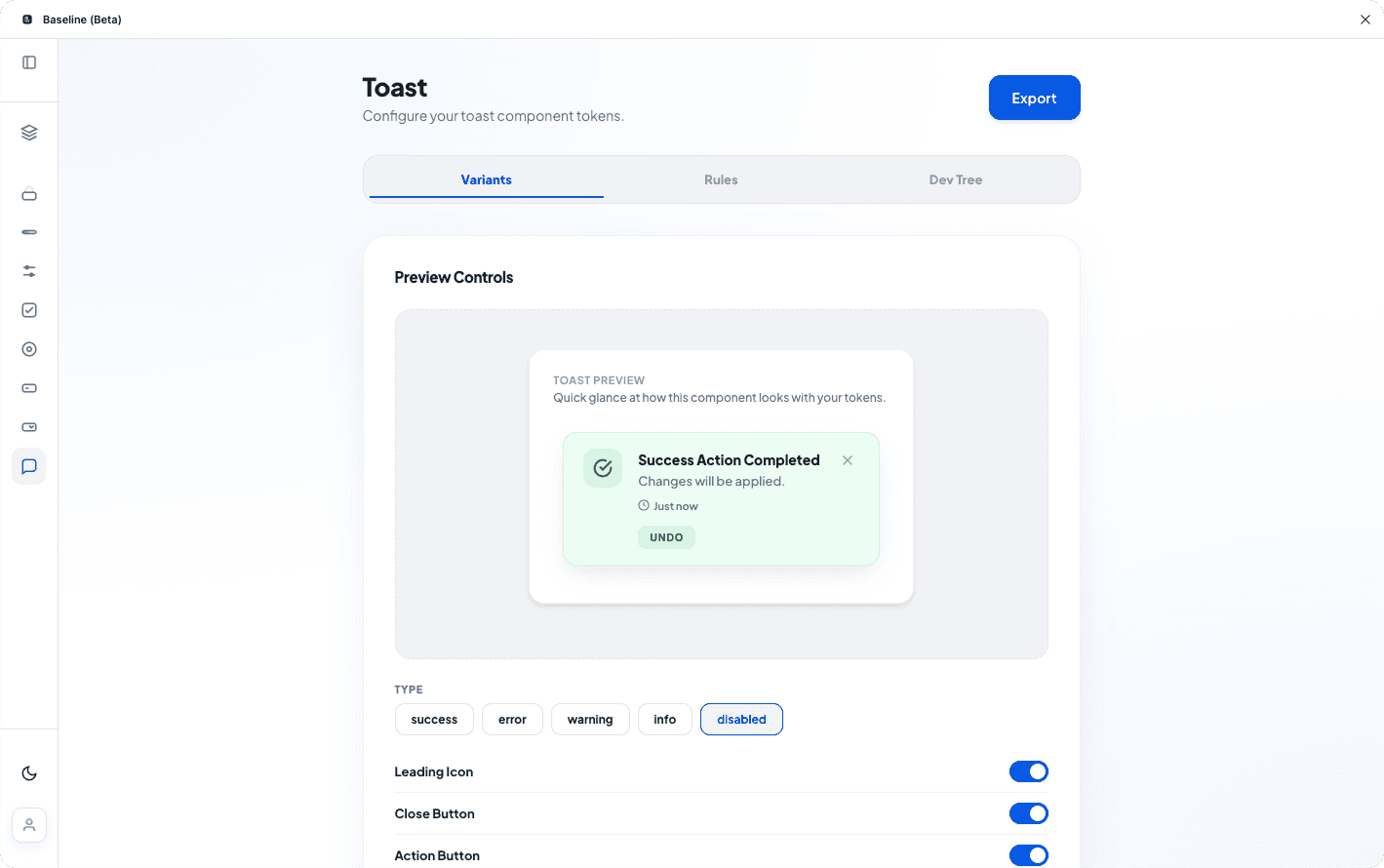

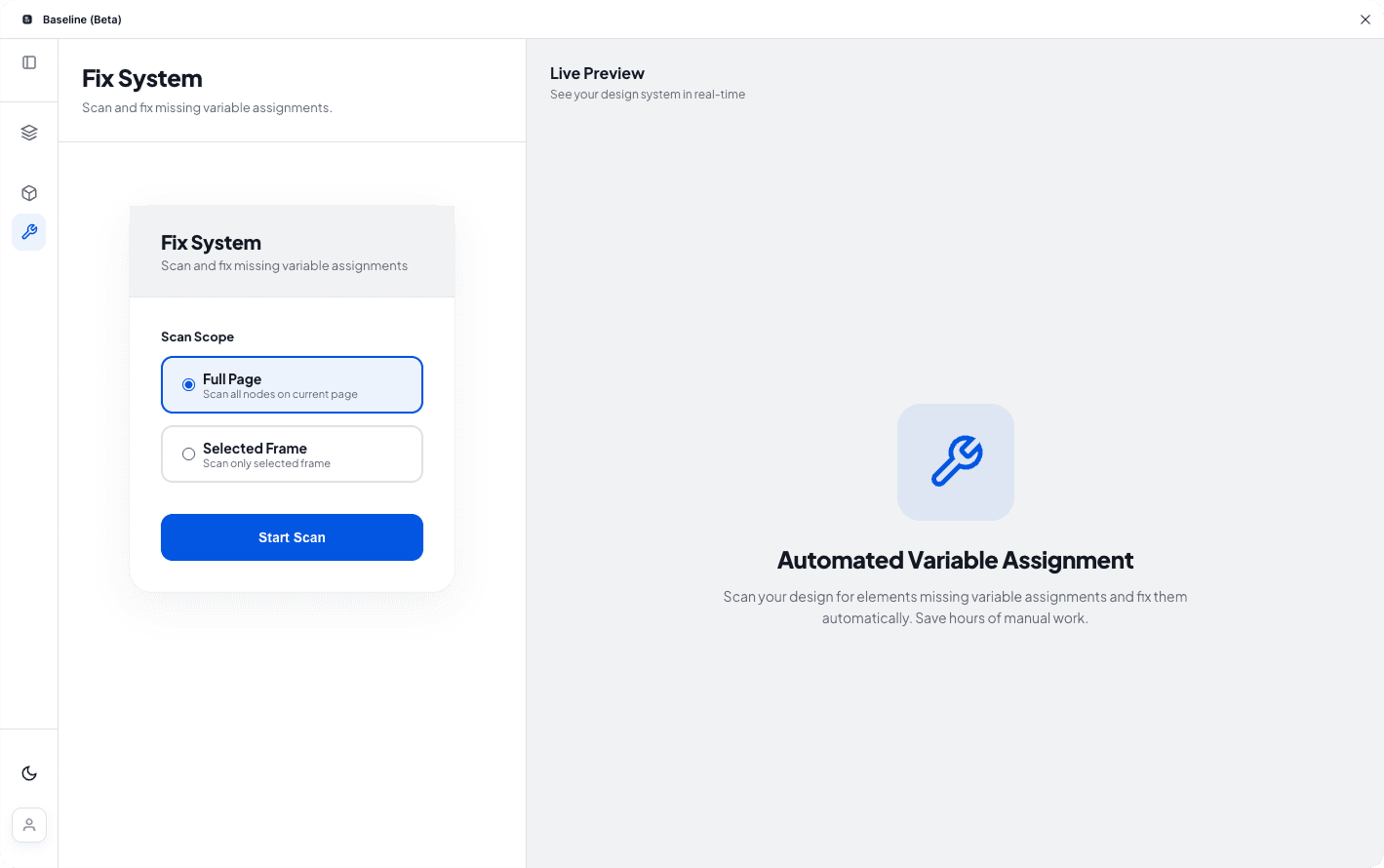

The Design System Repair Engine

The only tool that systematically detects and repairs broken Figma variables at scale

This isn't a "nice to have" feature—it's the core value proposition. Without automated repair, teams are stuck in an endless cycle of manual fixes.

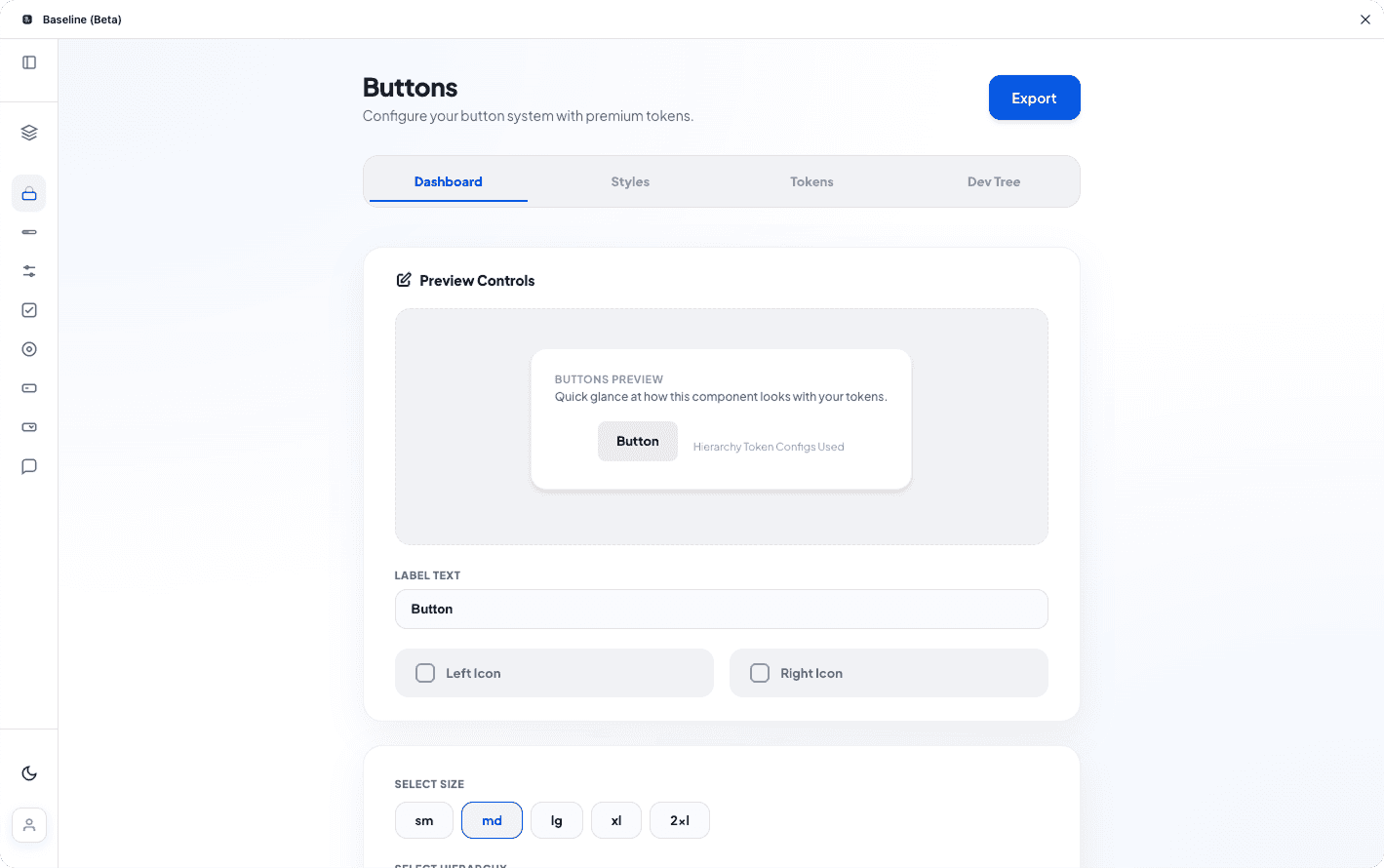

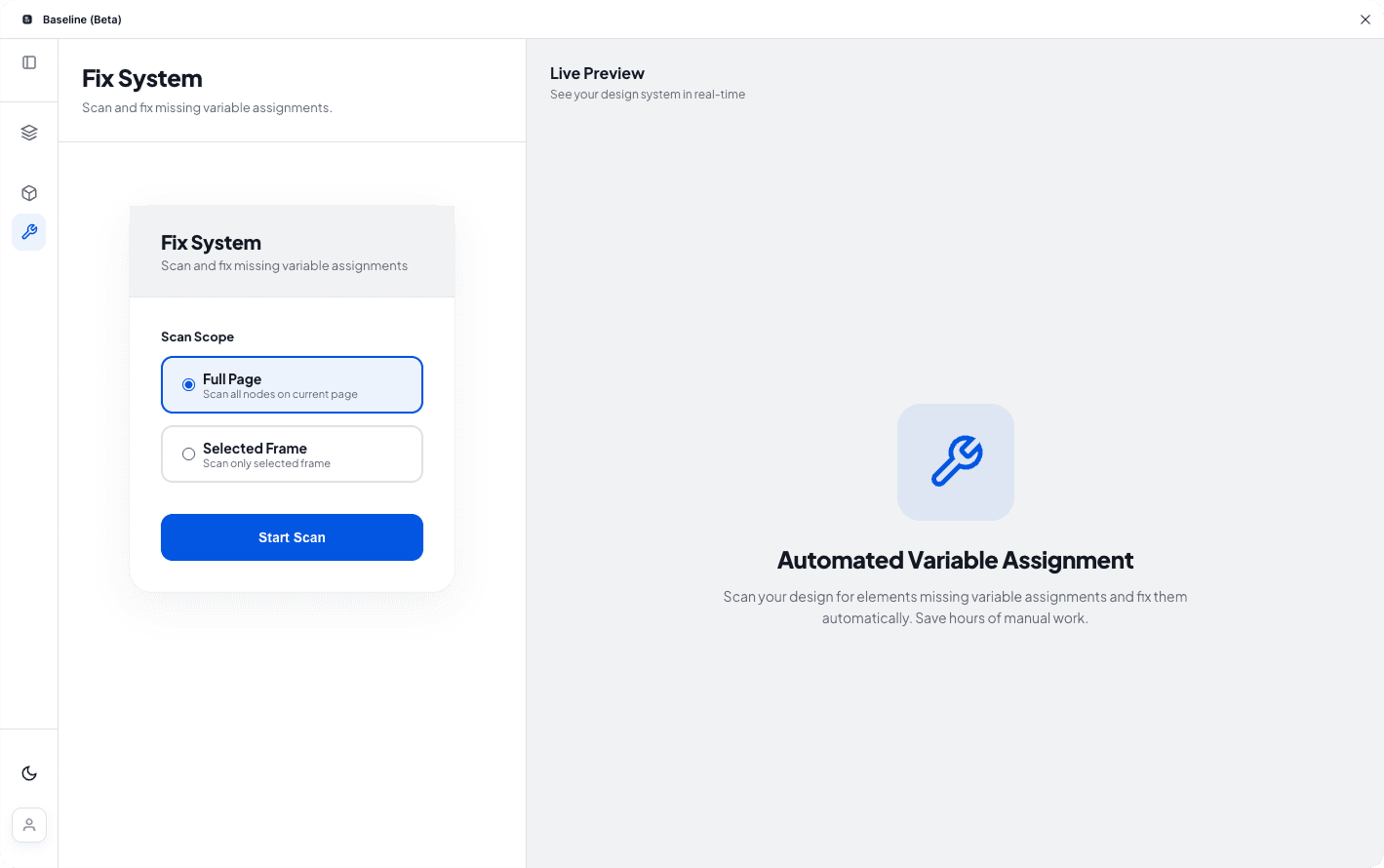

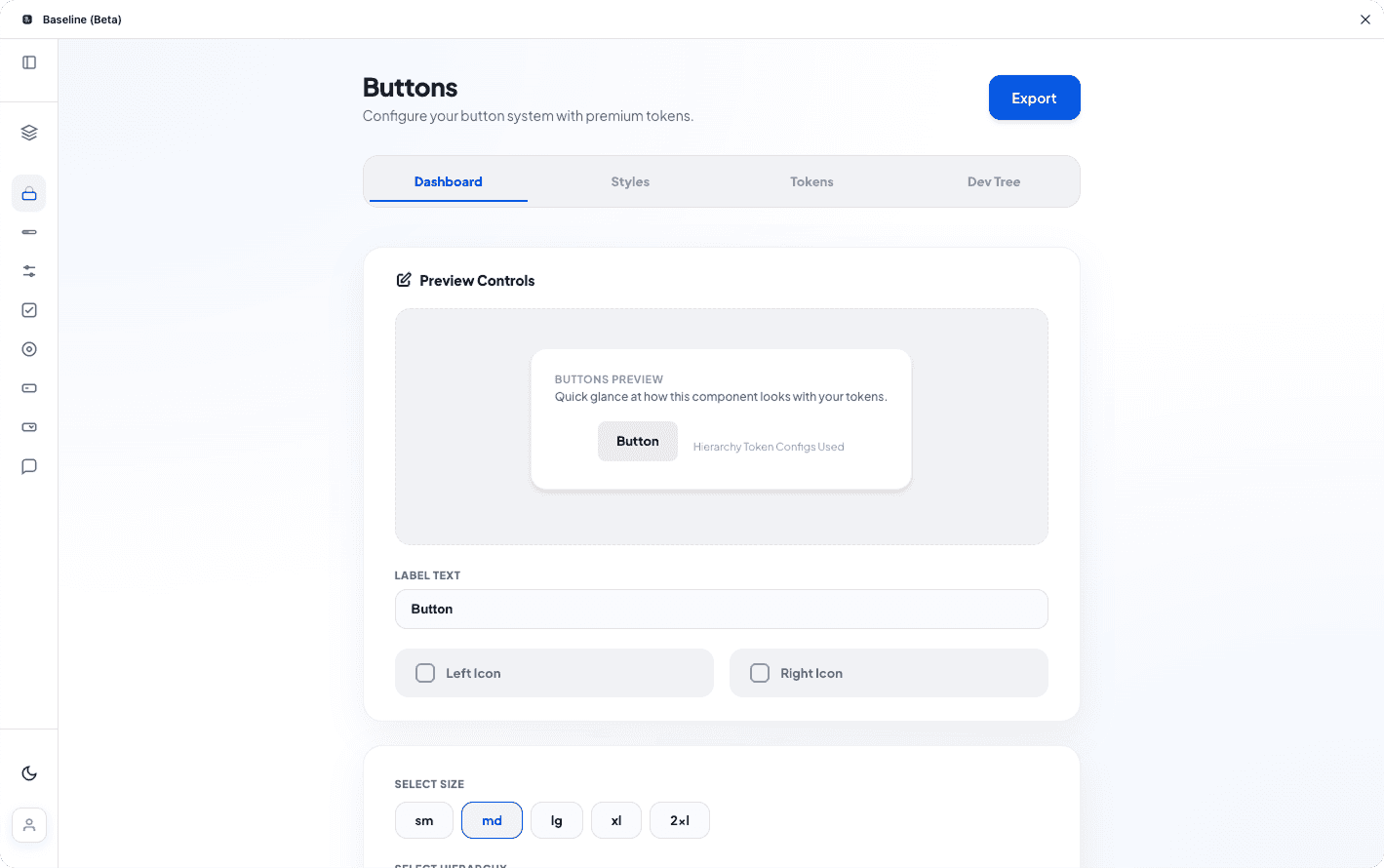

1. Deep File Scan (4.2s avg)

Traverse all pages, frames, and components. Build dependency graph. Match patterns against W3C token standards.

→ Scanned 247 components

→ Found 1,847 token references

→ Built dependency tree
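The scan step above can be sketched roughly as follows. This is a minimal illustration, not the plugin's actual code: `SceneNodeLike`, `boundVariableIds`, and `scanTree` are hypothetical names, and the node shape is a simplified stand-in for what the Figma plugin API exposes.

```typescript
// Hypothetical, simplified node shape; the real Figma plugin API differs.
interface SceneNodeLike {
  id: string;
  type: "PAGE" | "FRAME" | "COMPONENT" | "INSTANCE" | "OTHER";
  // IDs of variables bound to this node's properties (assumed field).
  boundVariableIds: string[];
  children?: SceneNodeLike[];
}

interface ScanResult {
  componentCount: number;
  referenceCount: number;
  // variableId -> ids of the nodes that reference it
  dependents: Map<string, string[]>;
}

function scanTree(roots: SceneNodeLike[]): ScanResult {
  const dependents = new Map<string, string[]>();
  let componentCount = 0;
  let referenceCount = 0;

  // Iterative depth-first traversal avoids deep recursion on large files.
  const stack: SceneNodeLike[] = [...roots];
  while (stack.length > 0) {
    const node = stack.pop()!;
    if (node.type === "COMPONENT") componentCount++;

    for (const variableId of node.boundVariableIds) {
      referenceCount++;
      const users = dependents.get(variableId) ?? [];
      users.push(node.id);
      dependents.set(variableId, users);
    }
    if (node.children) stack.push(...node.children);
  }
  return { componentCount, referenceCount, dependents };
}
```

The `dependents` map is the simplest useful form of a dependency graph: for any variable, it answers "which nodes break if this variable changes or disappears?"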

2. Issue Detection

Classify problems: detached variables, naming violations, orphaned styles, missing references.

Detached vars

Naming issues

Orphaned
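To make the four issue classes concrete, here is a small rule-based classifier. It is a sketch under assumptions: the input shapes (`VariableInfo`, `StyleInfo`, `PropertyUse`) and the naming pattern are illustrative, not the engine's real data model.

```typescript
type IssueKind =
  | "detached-variable"
  | "missing-reference"
  | "naming-violation"
  | "orphaned-style";

interface Issue { kind: IssueKind; targetId: string; }

// Hypothetical inputs produced by an earlier scan step.
interface VariableInfo { id: string; name: string; }
interface StyleInfo { id: string; usageCount: number; }
// variableId === null models a hard-coded override (a detached variable).
interface PropertyUse { nodeId: string; variableId: string | null; }

// Assumed naming rule: kebab-case segments separated by "/".
const TOKEN_NAME = /^[a-z0-9]+(-[a-z0-9]+)*(\/[a-z0-9]+(-[a-z0-9]+)*)*$/;

function detectIssues(
  variables: VariableInfo[],
  styles: StyleInfo[],
  uses: PropertyUse[],
): Issue[] {
  const issues: Issue[] = [];
  const knownIds = new Set(variables.map(v => v.id));

  for (const use of uses) {
    if (use.variableId === null) {
      issues.push({ kind: "detached-variable", targetId: use.nodeId });
    } else if (!knownIds.has(use.variableId)) {
      issues.push({ kind: "missing-reference", targetId: use.nodeId });
    }
  }
  for (const v of variables) {
    if (!TOKEN_NAME.test(v.name)) {
      issues.push({ kind: "naming-violation", targetId: v.id });
    }
  }
  for (const s of styles) {
    if (s.usageCount === 0) {
      issues.push({ kind: "orphaned-style", targetId: s.id });
    }
  }
  return issues;
}

// Tally issues per class, e.g. for a summary chart.
function countByKind(issues: Issue[]): Record<IssueKind, number> {
  const counts: Record<IssueKind, number> = {
    "detached-variable": 0,
    "missing-reference": 0,
    "naming-violation": 0,
    "orphaned-style": 0,
  };
  for (const issue of issues) counts[issue.kind]++;
  return counts;
}
```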

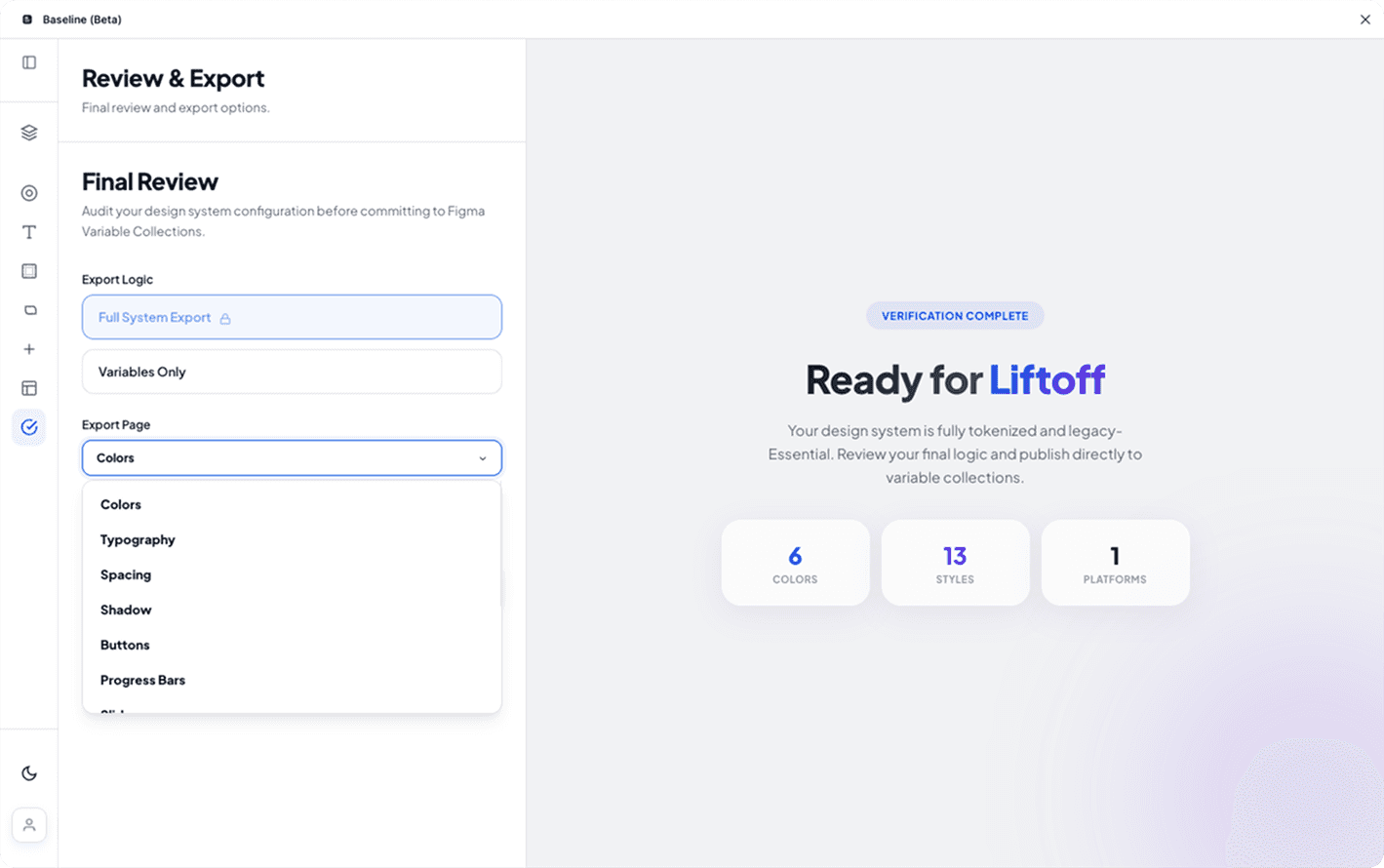

3. Preview Mode (Non-Destructive)

Show all proposed changes. User reviews and approves. This was critical for trust and adoption.

Design Decision: Early versions auto-applied fixes. Beta testers rejected it. Added preview mode → adoption increased 3x.
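One way to structure a non-destructive preview is to separate planning from applying: the engine produces a list of proposed changes, and nothing is written until the user approves specific items. The sketch below assumes a simplified binding map (node ID to variable name); `ProposedChange`, `describeChanges`, and `applyApproved` are hypothetical names.

```typescript
// Hypothetical change record; field names are illustrative.
interface ProposedChange {
  id: string;
  nodeId: string;
  from: string | null; // current binding (null = detached)
  to: string;          // proposed variable name
}

type Bindings = ReadonlyMap<string, string | null>;

// Render the plan as human-readable lines for a review UI.
function describeChanges(changes: ProposedChange[]): string[] {
  return changes.map(
    c => `${c.nodeId}: ${c.from ?? "(detached)"} -> ${c.to}`,
  );
}

// Apply only the approved changes to a copy; the input map is never mutated.
function applyApproved(
  bindings: Bindings,
  changes: ProposedChange[],
  approved: ReadonlySet<string>,
): Map<string, string | null> {
  const next = new Map(bindings);
  for (const change of changes) {
    if (approved.has(change.id)) next.set(change.nodeId, change.to);
  }
  return next;
}
```

Because `applyApproved` returns a new map, rejecting every change leaves the original bindings exactly as they were, which is the property that makes the preview trustworthy.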

4. Automated Repair (3.1s avg)

Re-link variables, normalize naming, remove orphans, generate audit report.

97% success rate. 4 issues required manual review (complex nested dependencies).
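A plausible re-linking strategy, shown here purely as an assumption about how such a step could work, is to match each detached raw value against the defined variables and only auto-fix unambiguous matches. Anything with zero or multiple candidates goes to a manual-review list, which mirrors the small set of issues that needed human attention. All type and function names are hypothetical.

```typescript
interface VariableDef { id: string; name: string; value: string; }
interface DetachedUse { nodeId: string; rawValue: string; }

interface RepairReport {
  relinked: { nodeId: string; variableId: string }[];
  manualReview: { nodeId: string; reason: string }[];
}

function relinkDetached(
  uses: DetachedUse[],
  variables: VariableDef[],
): RepairReport {
  // Index variables by normalized value so each lookup is a map hit.
  const byValue = new Map<string, VariableDef[]>();
  for (const v of variables) {
    const key = v.value.trim().toLowerCase();
    const bucket = byValue.get(key) ?? [];
    bucket.push(v);
    byValue.set(key, bucket);
  }

  const report: RepairReport = { relinked: [], manualReview: [] };
  for (const use of uses) {
    const candidates = byValue.get(use.rawValue.trim().toLowerCase()) ?? [];
    if (candidates.length === 1) {
      // Exactly one variable carries this value: safe to re-link.
      report.relinked.push({ nodeId: use.nodeId, variableId: candidates[0].id });
    } else {
      report.manualReview.push({
        nodeId: use.nodeId,
        reason: candidates.length === 0 ? "no matching variable" : "ambiguous match",
      });
    }
  }
  return report;
}
```

The returned report doubles as an audit trail: every node is accounted for as either re-linked or escalated.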

Measured Impact

85% time reduction with quantifiable ROI

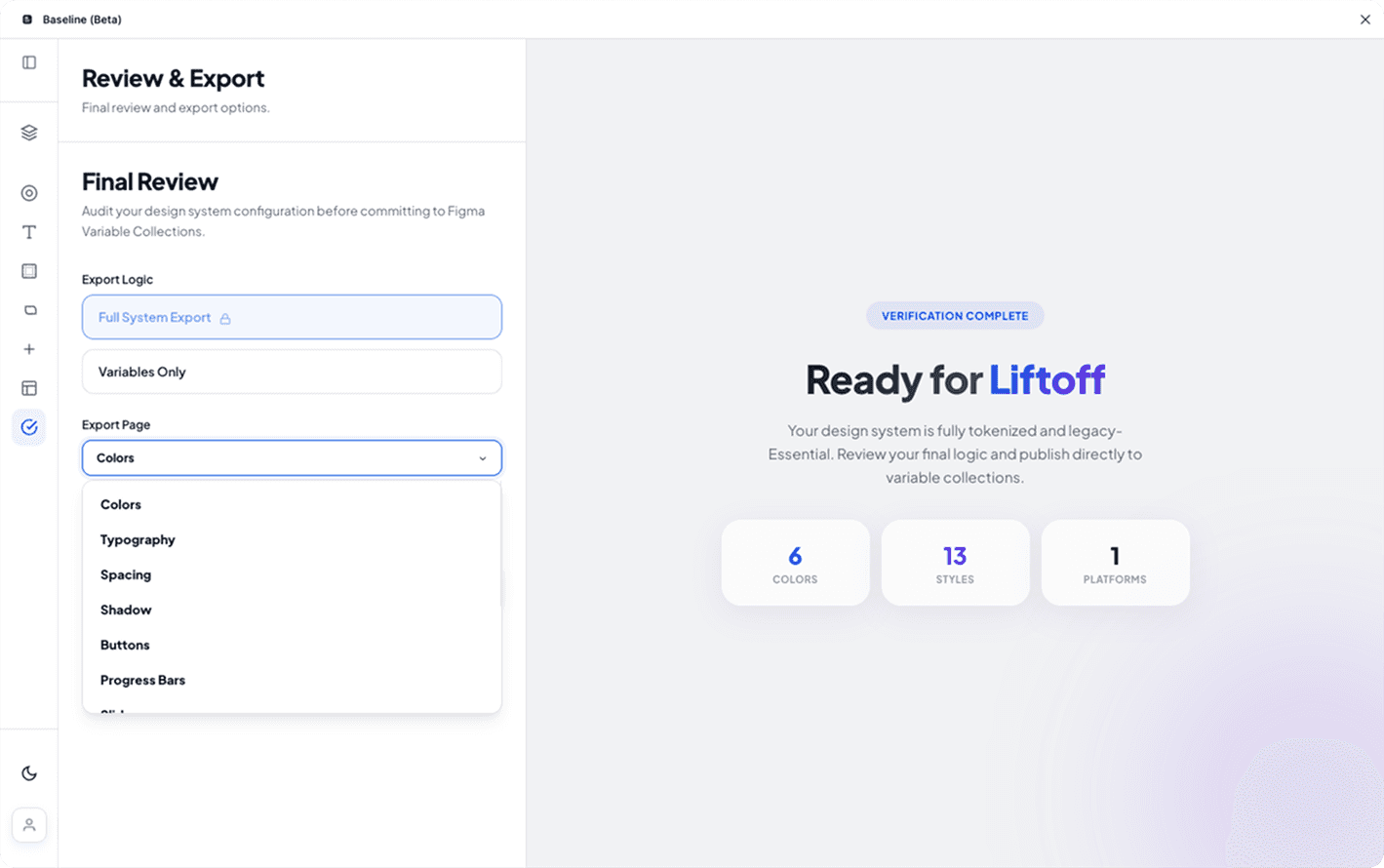

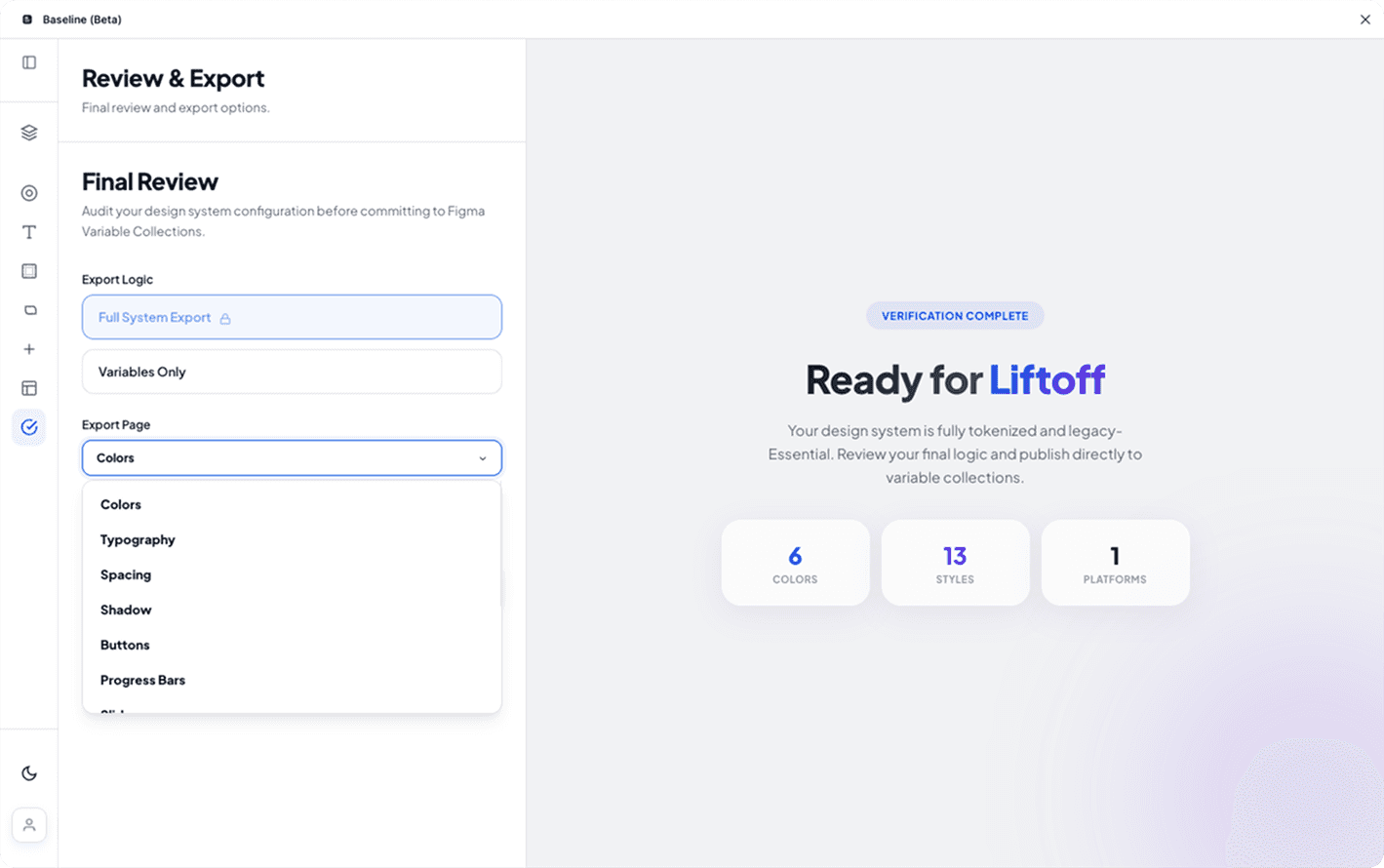

Setup Time: Manual vs. Baseline

Result: 136 hours saved per setup = $12,240 at $90/hr blended rate

Maintenance Hours Per Sprint (6-Sprint Comparison)

95% reduction in ongoing system maintenance (6.5 hrs → 0.25 hrs per sprint)
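The headline figures follow directly from the before and after hours. The short sketch below reproduces the arithmetic; the function names are illustrative. Note that 6.5 hrs → 0.25 hrs works out to about 96%, slightly above the rounded 95% quoted above.

```typescript
// Percentage reduction from a "before" value to an "after" value.
function percentReduction(before: number, after: number): number {
  return ((before - after) * 100) / before;
}

// Money saved given hours before/after and an hourly rate.
function costSaved(beforeHours: number, afterHours: number, hourlyRate: number): number {
  return (beforeHours - afterHours) * hourlyRate;
}

// Setup: 160 hrs -> 24 hrs at a $90/hr blended rate.
const setupReduction = percentReduction(160, 24); // 85
const setupSavings = costSaved(160, 24, 90);      // 12240

// Maintenance: 6.5 hrs -> 0.25 hrs per sprint.
const maintenanceReduction = Math.round(percentReduction(6.5, 0.25)); // 96
```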

Key Decisions

What we built — and what we deliberately didn’t

✓ Built: Preview Mode (Non-Destructive)

Why: Early beta feedback showed designers wouldn't trust auto-fixes without seeing changes first. Adoption went from 30% to 90% after adding this.

Tradeoff: Added 2 weeks to dev timeline, but essential for trust.

✓ Built: W3C Token Standard Compliance

Why: Teams wanted predictable, dev-friendly token names for CSS variables. Enforcing kebab-case and semantic naming reduced handoff errors by ~70%.

Tradeoff: Less flexibility in naming, but teams valued consistency over customization.
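As an illustration of the kebab-case rule, a deterministic normalizer can be written in a few lines. This is a sketch that assumes "/" separates token groups and should be preserved; `normalizeTokenName` is a hypothetical name, and the plugin's actual rules may be stricter.

```typescript
// Convert one path segment to kebab-case.
function toKebabSegment(segment: string): string {
  return segment
    .trim()
    .replace(/([a-z0-9])([A-Z])/g, "$1-$2") // split camelCase boundaries
    .replace(/[^A-Za-z0-9]+/g, "-")          // spaces, underscores, dots -> hyphen
    .replace(/^-+|-+$/g, "")                 // drop leading/trailing hyphens
    .toLowerCase();
}

// Normalize a full token name, keeping "/" as the group separator.
function normalizeTokenName(name: string): string {
  return name
    .split("/")
    .map(toKebabSegment)
    .filter(segment => segment.length > 0)
    .join("/");
}

const examples = [
  normalizeTokenName("Primary Color"),          // "primary-color"
  normalizeTokenName("color/BrandPrimary_500"), // "color/brand-primary-500"
  normalizeTokenName(" spacing / XL "),         // "spacing/xl"
];
```

Because the output maps predictably onto CSS custom property names, the same function can run in both the scan (to flag violations) and the repair (to propose the fix).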

✗ Cut: AI-Powered Suggestions

Why: Initially planned LLM-based token naming suggestions. Abandoned after testing showed it added complexity without clear value. Rule-based system was 10x faster and more predictable.

Learning: Not every problem needs AI. Deterministic systems are better for structured tasks.

✗ Cut: Continuous Monitoring

Why: Wanted real-time alerts when system health degraded. But Figma plugin API limitations and resource constraints made this infeasible for v1.

Future: Moved to v2 roadmap. Current workaround: teams run manual scans weekly.

Validation

12 teams, 6 weeks, clear signal

Usage Metrics

Avg issues detected per scan

Successful variable reassignment across systems

Full system scan completed in about 4 seconds, with repair averaging roughly 3 seconds more

Validated across 12 teams in 6 weeks

Qualitative Feedback

"Preview mode is genius. I trust it because I see what it's doing."

— Senior UX Designer, Krishaweb

Reflection

Three things I'd do differently

1. Start with repair, not generation

I initially built token generation first because it seemed like the "core feature." But beta users cared more about fixing their existing systems. I should have validated the repair engine first—it's the actual differentiator.

Lesson: Build the unique value prop first, even if it feels like a "secondary" feature.

2. Preview mode should have been v1, not v1.2

Users rejected auto-fixes immediately. Adding preview later required refactoring the entire repair flow. If I'd included it from day one in response to trust concerns, it would have saved 3 weeks of rework.

Lesson: When dealing with design files (people's work), trust > automation speed.

3. Measure time savings earlier

I had anecdotal "this saves time" feedback but didn't quantify it until week 4. The 85% time reduction stat became our strongest marketing message. I should have instrumented this from day 1 of the beta.

Lesson: Quantify value early. It clarifies roadmap priorities and selling points.

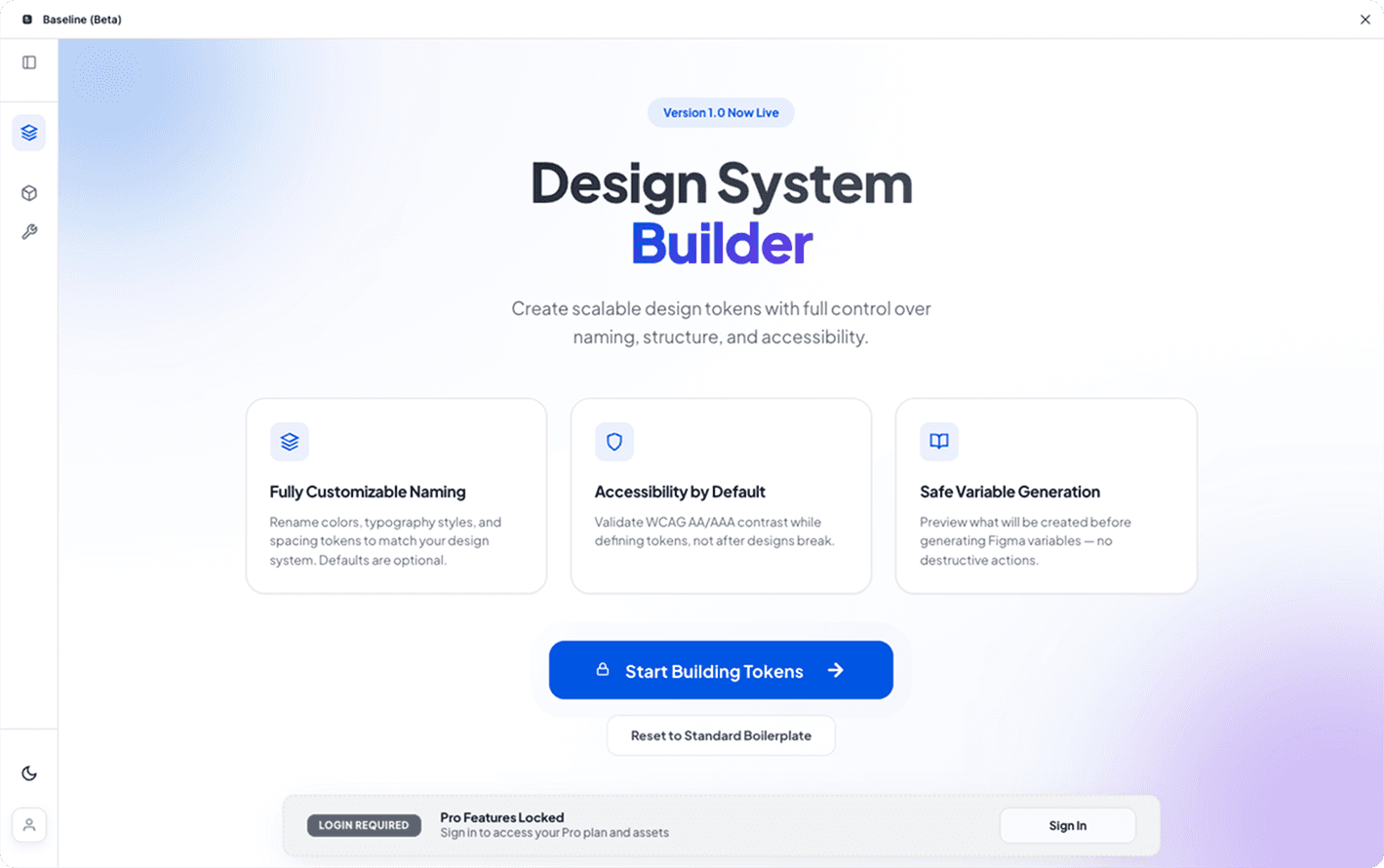

Validated product-market fit in maintenance tooling

Baseline proved that design system maintenance is a bigger pain point than creation. By focusing on the repair engine as the core differentiator, we:

→ Reached 25+ Figma Community users

→ Reduced setup time by 85% (160 hrs → 24 hrs)

→ Achieved 90% adoption rate among beta teams

→ Cut maintenance from 7 hrs/sprint to 15 minutes (95% reduction)